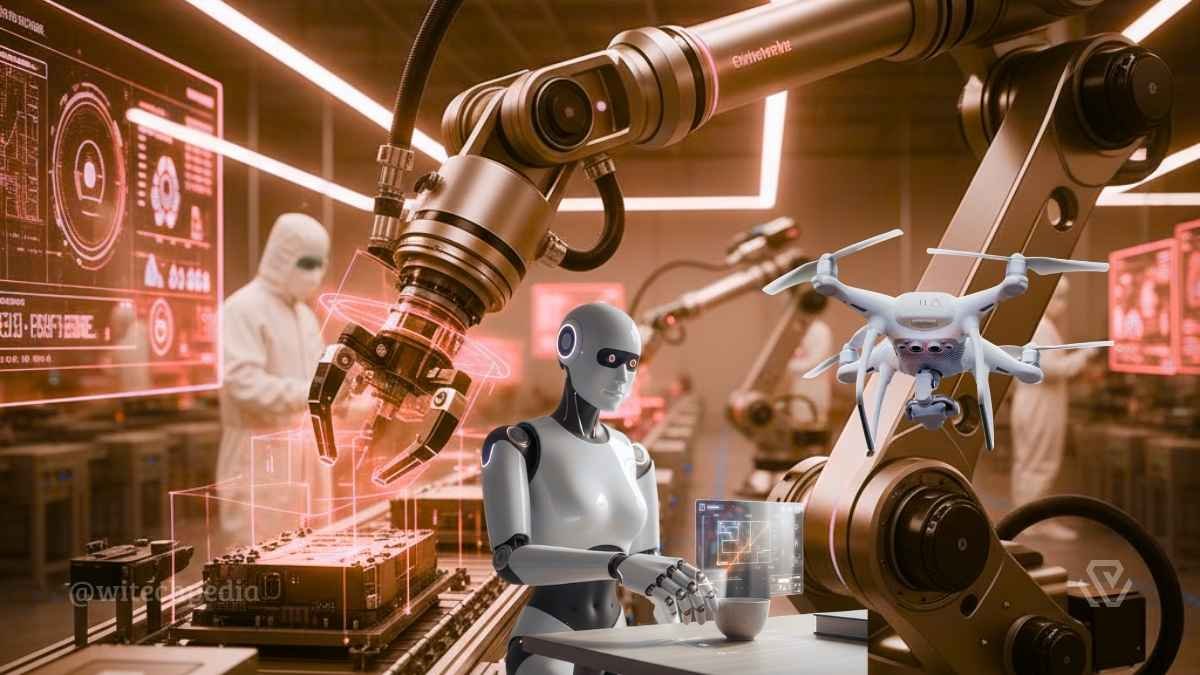

Physical AI refers to the next generation of artificial intelligence systems that move beyond digital interfaces to perceive, reason, and act within the physical world. Unlike traditional AI, which is confined to virtual environments (processing text or images for human consumption), Physical AI is “embodied”: it bridges the gap between digital “bits” and physical “atoms.” In 2026, the field has become a cornerstone of Agentic AI, in which machines no longer just suggest actions but execute them autonomously in complex environments.

What is Physical AI? (Definition & Scope)

At its core, Physical AI is the intelligence that enables machines to understand the laws of physics, spatial relationships, and real-time causality. While traditional robotics relied on rigid, pre-programmed instructions, Physical AI utilizes foundation models—such as Vision-Language-Action (VLA) models—to adapt to unpredictable real-world scenarios.

The Evolution from Digital to Embodied AI

The transition to Physical AI marks the end of the “Chatbot Era” and the beginning of the “Action Era.” According to research from the Center for Security and Emerging Technology (CSET), this convergence of AI and robotics is as transformative as the introduction of the smartphone. It shifts the paradigm from task-specific automation to generalizable physical reasoning.

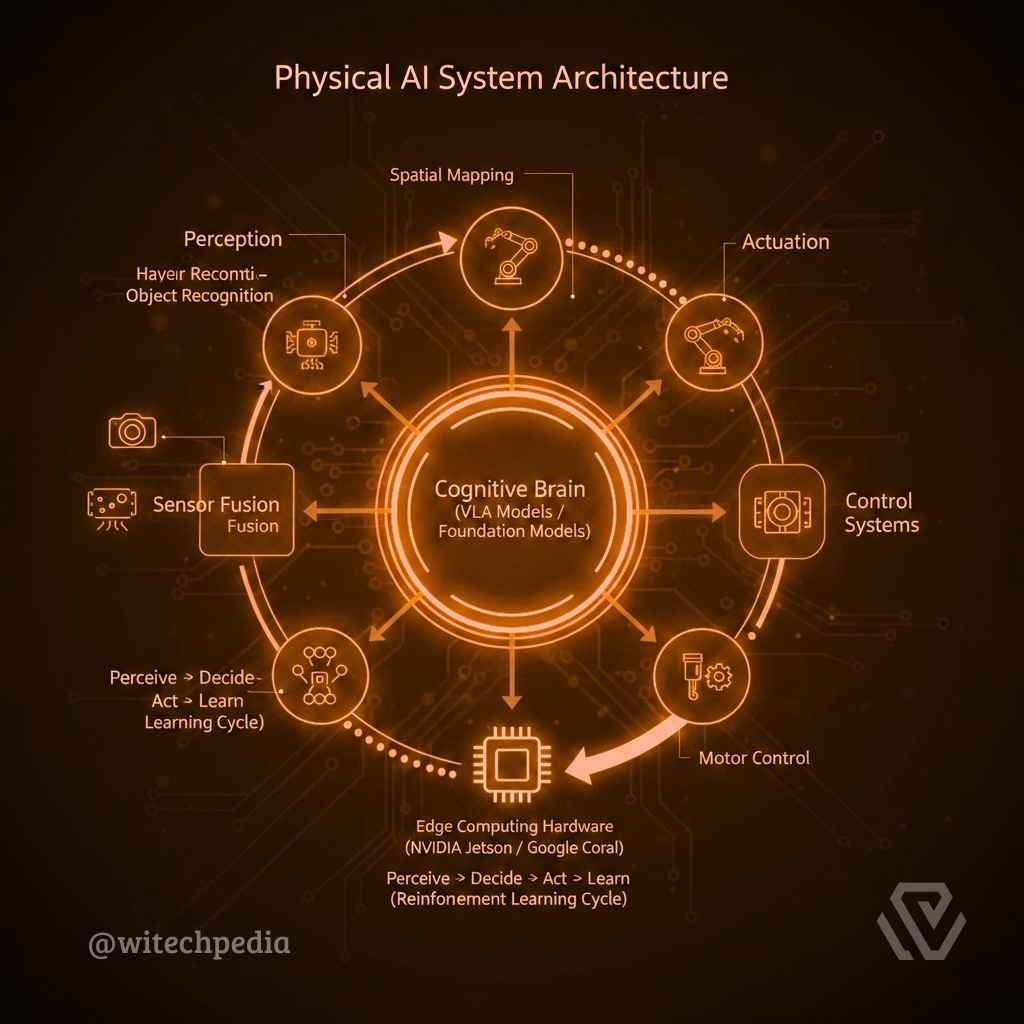

Core Components of Physical AI Systems

To function in the real world, a Physical AI system requires a tightly coupled architecture often referred to as the “Perceive-Decide-Act-Govern” loop.
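To make the loop concrete, here is a minimal Python sketch of one robot moving along a single axis toward a target. Everything in it (the 1-D track, the proportional controller, the speed limit, the noise level) is an illustrative assumption, not a description of any real system. In this sketch the governance check sits between Decide and Act, so an unsafe command never reaches the actuator; that ordering is also our assumption.

```python
import random
from dataclasses import dataclass


@dataclass
class RobotState:
    position: float  # metres along a 1-D track (toy assumption)


def perceive(state: RobotState, noise_std: float, rng: random.Random) -> float:
    """Return a noisy position reading, standing in for a real sensor."""
    return state.position + rng.gauss(0.0, noise_std)


def decide(measured: float, target: float, gain: float) -> float:
    """Proportional controller: command a velocity toward the target."""
    return gain * (target - measured)


def govern(velocity: float, max_speed: float) -> float:
    """Safety layer: clamp the commanded velocity to a hard speed limit."""
    return max(-max_speed, min(max_speed, velocity))


def act(state: RobotState, velocity: float, dt: float) -> None:
    """Apply the command by integrating velocity over one time step."""
    state.position += velocity * dt


def run_loop(target: float, steps: int = 200, dt: float = 0.05, seed: int = 0) -> RobotState:
    """Run the perceive -> decide -> govern -> act cycle for a fixed number of steps."""
    rng = random.Random(seed)
    state = RobotState(position=0.0)
    for _ in range(steps):
        reading = perceive(state, noise_std=0.01, rng=rng)
        command = decide(reading, target, gain=2.0)
        safe_command = govern(command, max_speed=0.5)
        act(state, safe_command, dt)
    return state


final = run_loop(target=1.0)
print(f"final position: {final.position:.3f} m")
```

Early on, the controller asks for more than 0.5 m/s and the governance step caps it; once the robot is close, the proportional term takes over and the robot settles near the target despite the sensor noise.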

Sensory Perception & Spatial Intelligence

Physical AI depends on multimodal sensor inputs to build a high-fidelity “World Model.”

- LiDAR and Computer Vision: Essential for 3D spatial mapping and object recognition.

- Sensor Fusion: The integration of data from cameras, radar, and haptic sensors to ensure the system understands its environment even in low-visibility or complex terrains.

- Spatial Intelligence: The ability of an AI to reason about 3D space, an area in which companies like NVIDIA invest heavily, for example through their Omniverse simulation platform.
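Sensor fusion can be illustrated in its simplest form: combining independent range readings by weighting each one inversely to its variance. This is the measurement-update step that a Kalman filter generalizes. The sensor readings and variances below are made-up numbers, and production stacks typically run Kalman or particle filters over a full 3D state, not a single scalar.

```python
def fuse(estimates: list[tuple[float, float]]) -> tuple[float, float]:
    """Inverse-variance weighted fusion of independent, unbiased measurements.

    Each estimate is (value, variance). Returns the fused (value, variance).
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / var for _, var in estimates]  # noisier sensors get less weight
    total = sum(weights)
    value = sum(w * x for w, (x, _) in zip(weights, estimates)) / total
    return value, 1.0 / total  # fused variance is smaller than any single input's


# Made-up readings: in fog the camera's depth estimate is much noisier than radar.
camera = (9.0, 4.0)   # (distance in m, variance in m^2)
radar = (10.0, 0.25)
distance, variance = fuse([camera, radar])
print(f"fused distance: {distance:.2f} m, variance: {variance:.3f} m^2")
```

Because the radar's variance is far smaller, the fused distance (about 9.94 m) sits close to the radar reading. This is how fusion keeps the world model usable when one modality degrades in low visibility.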

The Cognitive “Brain” & VLA Models

The “Brain” of Physical AI consists of Vision-Language-Action (VLA) models. These models allow a robot to receive a command in natural language (e.g., “Sort these fragile items”), perceive the items through vision, and translate that intent into precise motor control (Action).
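Some VLA models (RT-2 and OpenVLA, for example) turn language-model outputs into motor commands by discretizing each continuous action dimension into a fixed number of bins, so that actions can be emitted as ordinary tokens. The sketch below shows only that encode/decode step. It assumes 256 uniform bins over a normalized range of [-1, 1]; the bin count, the range, and the example action are illustrative, and the model that predicts the tokens is out of scope.

```python
NUM_BINS = 256          # assumption: bins per action dimension
LOW, HIGH = -1.0, 1.0   # assumption: actions normalized to [-1, 1]


def to_tokens(action: list[float]) -> list[int]:
    """Map each continuous action value to a bin index in [0, NUM_BINS - 1]."""
    width = (HIGH - LOW) / NUM_BINS
    tokens = []
    for value in action:
        clipped = min(max(value, LOW), HIGH)      # out-of-range commands are clipped
        index = int((clipped - LOW) / width)
        tokens.append(min(index, NUM_BINS - 1))   # HIGH itself falls in the last bin
    return tokens


def from_tokens(tokens: list[int]) -> list[float]:
    """Map bin indices back to the centre of each bin (continuous motor command)."""
    width = (HIGH - LOW) / NUM_BINS
    return [LOW + (t + 0.5) * width for t in tokens]


# Example: a 7-D arm action (six end-effector deltas plus a gripper command).
action = [0.12, -0.40, 0.05, 0.0, 0.0, 0.3, 1.0]
tokens = to_tokens(action)
recovered = from_tokens(tokens)
print(tokens)
```

The round trip loses at most half a bin width (about 0.004 here) per dimension, which is the precision cost of treating motor commands as tokens.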

Actuation & Edge Computing

Because real-world actions require millisecond-level response times, Physical AI often runs on edge computing hardware located on or near the machine itself. On-device AI platforms such as NVIDIA's Jetson modules, along with research efforts like the analog AI chips from IBM Research, enable local processing. This avoids the network round-trip latency that could otherwise lead to physical accidents.
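The latency argument comes down to simple arithmetic: a machine keeps moving while it waits for a decision, so the distance it covers "blind" is speed multiplied by latency. The figures below (a 2 m/s mobile robot, a 100 ms cloud round trip, a 10 ms on-device inference) are illustrative assumptions, not measurements.

```python
def blind_distance_m(speed_m_per_s: float, latency_ms: float) -> float:
    """Distance travelled before a delayed decision can take effect."""
    return speed_m_per_s * latency_ms / 1000.0


for label, latency in [("cloud round trip", 100.0), ("on-device", 10.0)]:
    print(f"{label}: {blind_distance_m(2.0, latency):.2f} m")
```

At 2 m/s, 100 ms of delay means 0.20 m travelled before the robot can react, compared with 0.02 m at 10 ms. That difference can decide whether a robot stops short of an obstacle or a person.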

Key Applications of Physical AI in 2026

Some industry forecasts project the global market for Physical AI to reach $1 trillion by 2030, driven by its expansion across critical industries.

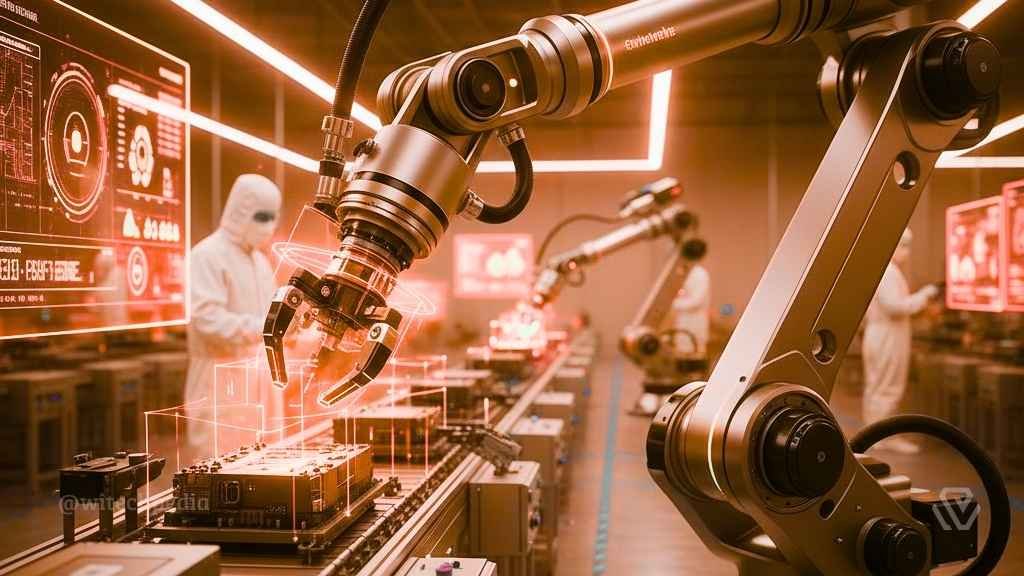

- Smart Manufacturing (Industry 5.0): Collaborative robots (cobots) use Physical AI to work alongside humans, handling variability in assembly lines that previously required manual labor.

- Autonomous Mobility: Beyond self-driving cars, Physical AI powers autonomous drones and warehouse fleets that manage logistics and supply chains with minimal human intervention.

- Healthcare Robotics: Surgical systems such as Johnson & Johnson's Ottava robot are designed to use physical intelligence to perform minimally invasive procedures with very high precision.

- Smart Spaces: Large-scale environments like airports and “Dark Warehouses” utilize fixed camera networks and Physical AI agents to optimize pedestrian flow and cargo movement.

Technical Challenges: The Sim-to-Real Gap

One of the primary hurdles in Physical AI development is the “Sim-to-Real” gap. An AI can be trained over millions of episodes in a physics-based simulation (such as NVIDIA Isaac Sim), but the real world contains “noise” (friction, sensor noise, and wear-and-tear) that is difficult to simulate perfectly.
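A common way to narrow this gap, not named in the text above, is domain randomization. Instead of training in one fixed simulated world, each training episode samples its physical parameters from a range, so the learned policy must cope with variation it will later meet on real hardware. The parameter names and ranges below are hypothetical placeholders. A real pipeline would pass them to a simulator such as Isaac Sim through that simulator's own API, which this sketch does not attempt to model.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class PhysicsParams:
    friction: float          # hypothetical: ground friction coefficient
    payload_kg: float        # hypothetical: mass of the carried object
    sensor_noise_std: float  # hypothetical: std-dev of range noise (m)
    motor_delay_ms: float    # hypothetical: actuator latency


# Hypothetical ranges; real ones would come from measurements of the target robot.
RANGES = {
    "friction": (0.4, 1.2),
    "payload_kg": (0.0, 2.0),
    "sensor_noise_std": (0.0, 0.02),
    "motor_delay_ms": (5.0, 40.0),
}


def sample_params(rng: random.Random) -> PhysicsParams:
    """Draw one randomized 'world' for a single training episode."""
    return PhysicsParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


def training_worlds(num_episodes: int, seed: int = 0) -> list[PhysicsParams]:
    """Seeded, reproducible list of randomized worlds, one per episode."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(num_episodes)]


for world in training_worlds(3):
    print(world)
```

Each `PhysicsParams` would configure one simulated episode. A policy that performs well across all of them is more likely to transfer to a real robot than one tuned to a single, idealized set of values.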

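- Physics-Informed Neural Networks (PINNs): These are increasingly used to build the laws of physics directly into training, typically by adding a penalty to the loss function whenever the network's predictions violate the governing equations.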

- Data Scarcity: Unlike text data, physical interaction data is expensive and time-consuming to collect, leading to a rise in Synthetic Data Generation.
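The PINN idea fits in a few lines: the training loss is a data term (match the few real observations) plus a physics term (penalize violations of a known equation). The sketch below uses free fall, y''(t) = -g, as the known law and estimates y'' with central finite differences over a predicted trajectory. A real PINN would differentiate a neural network with automatic differentiation (for example in PyTorch or JAX) and minimize this loss, which this sketch does not do. The `physics_weight` value and the sample data are illustrative assumptions.

```python
G = 9.81  # gravitational acceleration, m/s^2


def physics_residual(y: list[float], dt: float) -> float:
    """Mean squared violation of y'' = -G, using central finite differences."""
    residuals = [
        (y[i + 1] - 2 * y[i] + y[i - 1]) / dt**2 + G
        for i in range(1, len(y) - 1)
    ]
    return sum(r * r for r in residuals) / len(residuals)


def data_loss(y_pred: list[float], observed: dict[int, float]) -> float:
    """Mean squared error at the few time indices that have real measurements."""
    return sum((y_pred[i] - v) ** 2 for i, v in observed.items()) / len(observed)


def pinn_loss(y_pred: list[float], observed: dict[int, float], dt: float,
              physics_weight: float = 1.0) -> float:
    """Data term plus weighted physics term, as in a physics-informed objective."""
    return data_loss(y_pred, observed) + physics_weight * physics_residual(y_pred, dt)


dt = 0.1
true_y = [10.0 - 0.5 * G * (k * dt) ** 2 for k in range(11)]    # obeys free fall
straight = [10.0 - 5.0 * k * dt for k in range(11)]             # ignores gravity
obs = {0: true_y[0], 5: true_y[5], 10: true_y[10]}              # only three data points
print(pinn_loss(true_y, obs, dt), pinn_loss(straight, obs, dt))
```

With only three observations, many curves could fit the data reasonably well. The physics term narrows the search to curves that also accelerate at -g, which is how PINNs stretch scarce physical data further.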

Ethics, Safety, & Governance

In Physical AI, the stakes are higher; a “hallucination” in the physical world can result in property damage or injury.

- Built-in Governance: Modern systems include kill switches (emergency stops), real-time observability platforms, and policy engines that enforce safety limits and support compliance with regulations such as the EU AI Act.

- AI Cybersecurity: As robots become internet-connected agents, protecting them from adversarial attacks is a critical priority. (See our guide on AI Cybersecurity Threats in 2026).
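A policy engine of the kind described above can be sketched as a gate that every proposed action must pass before it reaches the actuators, with a kill switch that overrides everything and an audit log for observability. The rule names, limits, and `Action` fields below are hypothetical. They do not reflect the text of the EU AI Act or any particular product, and real emergency stops are typically implemented in safety-rated hardware, not in application code like this.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass(frozen=True)
class Action:
    speed_m_per_s: float    # commanded speed
    force_n: float          # commanded contact force
    human_within_1m: bool   # hypothetical flag supplied by perception


# Hypothetical rules: each returns a violation message, or None if the action is allowed.
Rule = Callable[[Action], Optional[str]]
RULES: list[tuple[str, Rule]] = [
    ("max_speed", lambda a: "speed above 1.5 m/s" if a.speed_m_per_s > 1.5 else None),
    ("max_force", lambda a: "force above 150 N" if a.force_n > 150.0 else None),
    ("human_proximity",
     lambda a: "above 0.25 m/s near a person"
     if a.human_within_1m and a.speed_m_per_s > 0.25 else None),
]


@dataclass
class PolicyEngine:
    kill_switch: bool = False
    audit_log: list[str] = field(default_factory=list)  # every decision is recorded

    def check(self, action: Action) -> bool:
        """Return True only if the action may reach the actuators."""
        if self.kill_switch:  # the kill switch overrides every other rule
            self.audit_log.append("BLOCKED: kill switch engaged")
            return False
        violations = []
        for name, rule in RULES:
            message = rule(action)
            if message is not None:
                violations.append(f"{name}: {message}")
        if violations:
            self.audit_log.append("BLOCKED: " + "; ".join(violations))
            return False
        self.audit_log.append("ALLOWED")
        return True


engine = PolicyEngine()
engine.check(Action(speed_m_per_s=1.0, force_n=50.0, human_within_1m=True))
print(engine.audit_log[-1])
```

Keeping the rules as data, separate from the AI that proposes actions, makes them easy to audit and extend. A model hallucination can then produce an unsafe command, but not an unsafe motion.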

Frequently Asked Questions (FAQ)

How is Physical AI different from a standard robot?

A standard robot follows pre-set scripts (e.g., “move arm to X, Y, Z”). Physical AI perceives a changing environment and decides how to move based on its understanding of the world.

What are some examples of Physical AI today?

Tesla’s Optimus humanoid, Amazon’s Proteus warehouse robots, and autonomous drone delivery fleets are prominent examples of Physical AI in action.

Is Physical AI related to AGI?

Many researchers, including some whose work has been covered by MIT News, argue that true Artificial General Intelligence (AGI) cannot be achieved without embodiment, because understanding causality requires interacting with the physical world.

For more deep dives into the technology of tomorrow, visit the WiTechPedia AI & ML Wiki.