When developers first hear the term “serverless,” the immediate reaction is often confusion: after all, the code has to run on something. However, serverless computing architecture doesn’t mean the absence of servers. It means that as a developer, you no longer have to provision, manage, patch, or scale them. By abstracting away the infrastructure layer, modern cloud providers let you spend most of your time writing business logic.

- What is Serverless Computing Architecture? (FaaS Explained)

- How Serverless Architecture Works (Under the Hood)

- Serverless vs. Microservices vs. Monolithic Architecture

- The Anatomy of a Modern Serverless Stack

- The Notorious “Cold Start” Problem (And How to Fix It)

- Top Serverless Providers in 2026

- Real-World Use Cases for Serverless Architecture

- Pros and Cons: Is Serverless Right for Your Next Project?

- Frequently Asked Questions (FAQ)

- Final Thoughts

In this complete guide, we will break down how Function-as-a-Service (FaaS) works, explore event-driven execution, and help you decide if a serverless stack is the right choice for your next cloud and data project.

What is Serverless Computing Architecture? (FaaS Explained)

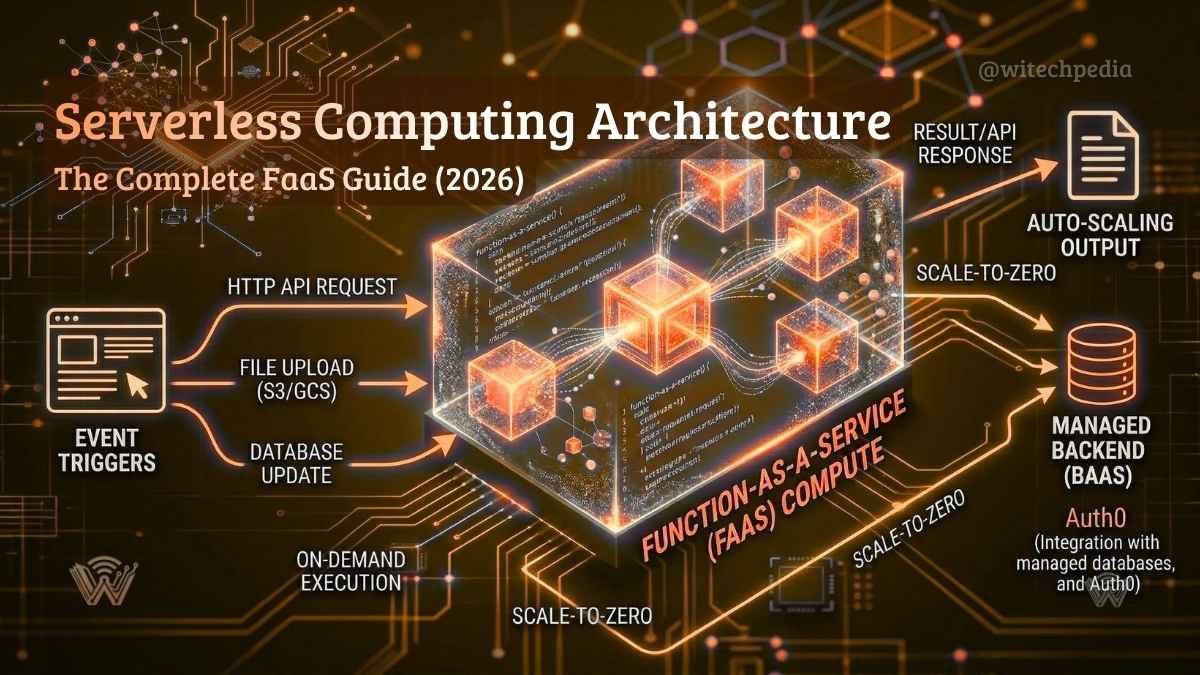

At its core, serverless computing is an execution model where the cloud provider (like AWS, Google Cloud, or Azure) dynamically allocates machine resources on demand. You write discrete blocks of code—called functions—and deploy them to the cloud.

These functions are event-driven and designed to be stateless. They sit dormant, incurring no compute charges, until a specific trigger wakes them up. Once triggered, the cloud provider spins up an ephemeral compute container, executes the code, and returns the result. The container may be kept warm for a short time to serve follow-up requests, after which it is torn down.
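
To make the idea of a stateless, event-driven function concrete, here is a minimal sketch in Python. It assumes the `handler(event, context)` entry-point convention used by AWS Lambda's Python runtime and an API Gateway-style event with a `queryStringParameters` field; the greeting logic itself is purely illustrative.

```python
import json


def handler(event, context):
    """Entry point the platform calls once per event.

    Nothing is remembered between calls: everything the function
    needs arrives in the event payload.
    """
    # queryStringParameters can be missing or None, so fall back to {}.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")

    # Response shape assumed here: an HTTP-style status, headers, and body.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }
```

Calling `handler({"queryStringParameters": {"name": "Ada"}}, None)` locally exercises the same code path the platform would run, which is one reason to keep handlers this small.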

How Serverless Architecture Works (Under the Hood)

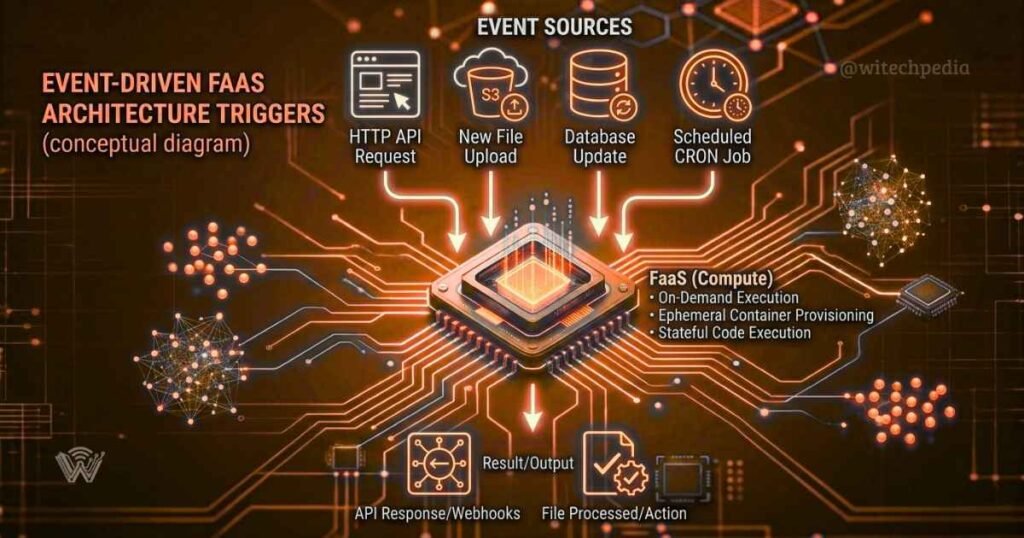

To truly understand serverless, you need to look at its two fundamental mechanisms: triggers and auto-scaling.

1. Event-Driven Execution

Serverless functions do not run continuously like a traditional Node.js or Python backend. They are strictly reactive. Common events that trigger a function include:

- An HTTP request routed through an API Gateway.

- A new file or image uploaded to cloud storage (like an AWS S3 bucket).

- A database modification (e.g., a new user row added to a database).

- A scheduled CRON job executing at a specific time.
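
Each of the triggers above delivers a differently shaped event payload. The sketch below shows one way a function might tell them apart. It assumes AWS-style payloads (an `eventSource` of `aws:s3` or `aws:dynamodb` inside `Records`, a `source` of `aws.events` for scheduled rules, and an `httpMethod` key for REST-style API Gateway proxy events); other providers and payload versions use different fields, and the returned labels are hypothetical.

```python
def classify_event(event: dict) -> str:
    """Return a label for the kind of trigger that produced this event."""
    records = event.get("Records") or []
    if records:
        # Storage and database-stream events arrive as a list of records.
        source = records[0].get("eventSource", "")
        if source == "aws:s3":
            return "storage_upload"
        if source == "aws:dynamodb":
            return "database_change"
    if event.get("source") == "aws.events":
        # Scheduled (cron-style) rules.
        return "schedule"
    if "httpMethod" in event:
        # HTTP request forwarded by an API gateway.
        return "http_request"
    return "unknown"
```

In practice each trigger is usually wired to its own function, so a classifier like this is mostly useful for logging or for a shared entry point in small projects.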

2. Ephemeral Compute and True Auto-Scaling

If your website suddenly goes from 10 visitors to 10,000 visitors in a minute, a fixed pool of traditional servers can be overwhelmed unless load balancing and auto-scaling were configured in advance. A serverless platform instead starts as many parallel, isolated function instances as the incoming requests need, up to your account's concurrency quota. When the traffic spike ends, idle instances are reclaimed and the function scales back down to zero.
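
How many parallel instances a spike actually needs can be estimated with Little's law: concurrency is roughly the request rate multiplied by the average execution time. The sketch below is a back-of-the-envelope calculation, not a provider formula; the function names and the example numbers are assumptions for illustration.

```python
import math


def required_concurrency(requests_per_second: float, avg_duration_seconds: float) -> int:
    """Estimate how many function instances must run in parallel (Little's law)."""
    return math.ceil(requests_per_second * avg_duration_seconds)


def would_be_throttled(requests_per_second: float,
                       avg_duration_seconds: float,
                       concurrency_limit: int) -> bool:
    """True if the estimated concurrency exceeds the account's quota."""
    return required_concurrency(requests_per_second, avg_duration_seconds) > concurrency_limit


# 10,000 requests spread over one minute, each taking about 200 ms.
spike_rps = 10_000 / 60
needed = required_concurrency(spike_rps, 0.2)
```

Under these assumptions the spike needs only a few dozen concurrent instances, because each one is busy for a fraction of a second. Slow functions or bursts that arrive all at once push the number up quickly.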

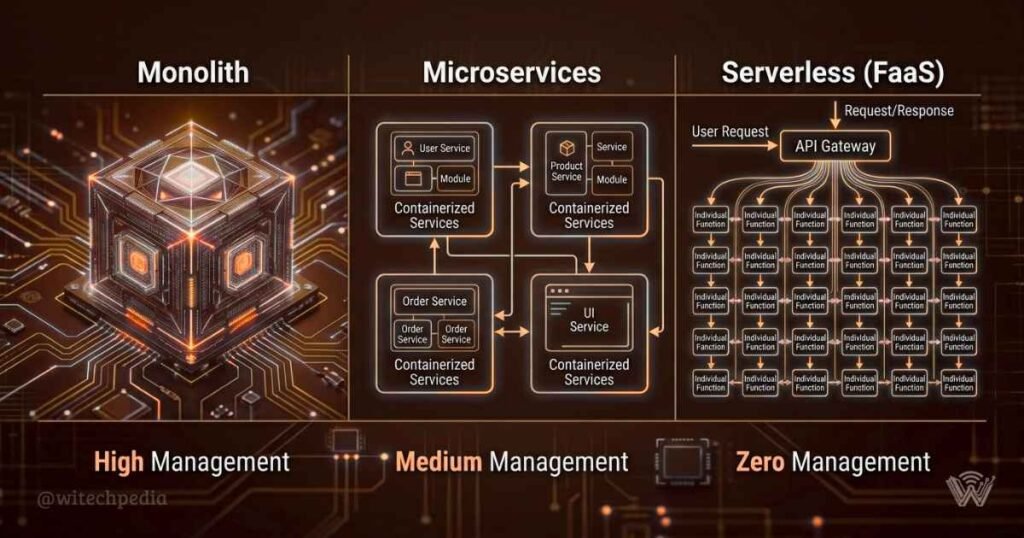

Serverless vs. Microservices vs. Monolithic Architecture

How does this compare to traditional backends? Let’s break down the evolution of backend infrastructure.

| Feature | Monolith | Microservices (Containers) | Serverless (FaaS) |
| --- | --- | --- | --- |
| Deployment | Entire application deployed at once | Deployed as independent containerized services | Deployed as individual, single-purpose functions |
| Scaling | Scales the entire app (inefficient) | Scales specific services (better) | Scales per individual invocation (most granular) |
| Pricing | Pay for 24/7 server uptime | Pay for 24/7 container uptime | Pay only for execution time (billed in milliseconds) |
| Management | High DevOps overhead | High DevOps and orchestration overhead (Kubernetes) | No server management (handled by the provider) |

The Anatomy of a Modern Serverless Stack

Building a serverless application requires piecing together several managed cloud services:

- FaaS (Compute): This is where your custom code lives. It handles the heavy lifting, data processing, and logic.

- API Gateway: The “front door” of your application. It receives HTTP requests from your frontend (like a React or Vue app) and routes them to the correct serverless function.

- BaaS (Backend-as-a-Service): Serverless architectures rely heavily on managed third-party services. Instead of building your own authentication or database, you might use Auth0 for logins and a managed vector database (like Pinecone) or a NoSQL database (like DynamoDB) for storage.
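
The gateway's “front door” role boils down to a lookup from method and path to a function. Managed gateways do this through configuration rather than code, so the sketch below is only a mental model. The route table, the two handlers, and the event fields (`httpMethod`, `path`, `body`) are hypothetical.

```python
from typing import Callable, Dict, Tuple

Handler = Callable[[dict], dict]


def list_users(event: dict) -> dict:
    # A real function would query a managed database here.
    return {"statusCode": 200, "body": "[]"}


def create_user(event: dict) -> dict:
    # Echo the submitted body back to keep the example self-contained.
    return {"statusCode": 201, "body": event.get("body") or ""}


# (HTTP method, path) -> function; a gateway holds this mapping as configuration.
ROUTES: Dict[Tuple[str, str], Handler] = {
    ("GET", "/users"): list_users,
    ("POST", "/users"): create_user,
}


def route(event: dict) -> dict:
    """Dispatch an incoming request to the matching function, or return 404."""
    key = (event.get("httpMethod", ""), event.get("path", ""))
    target = ROUTES.get(key)
    if target is None:
        return {"statusCode": 404, "body": "Not Found"}
    return target(event)
```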

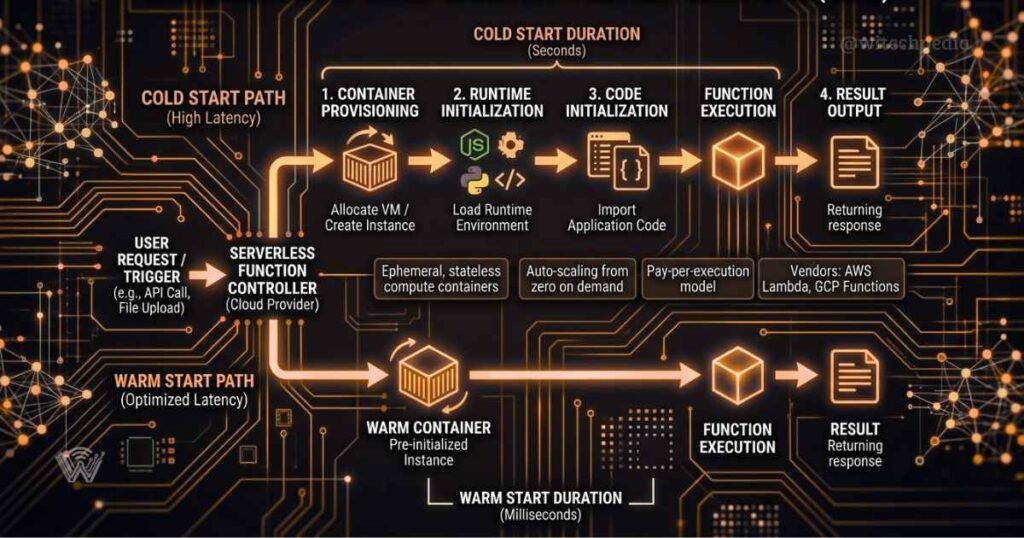

The Notorious “Cold Start” Problem (And How to Fix It)

The biggest drawback you are likely to encounter with serverless is the cold start.

Because serverless containers are spun down when not in use, the very first request after a period of inactivity takes longer to process. The cloud provider has to allocate resources, start the runtime environment, and load your code before it can execute. Depending on the runtime and package size, this can add anywhere from roughly 200 milliseconds to over a second of latency.
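
One way to observe this in your own functions is to rely on the fact that module-level code runs once per container, while the handler runs once per request. The sketch below flags the first invocation in a fresh container as a cold start. It assumes a Lambda-style `handler(event, context)` entry point, and the returned field names are made up for illustration.

```python
import time

# Module-level code runs once per container, as part of the cold start.
_CONTAINER_STARTED_AT = time.monotonic()
_invocation_count = 0


def handler(event, context):
    """Report whether this invocation landed on a fresh or a warm container."""
    global _invocation_count
    _invocation_count += 1
    return {
        # Only the first call in a given container pays the start-up cost.
        "cold_start": _invocation_count == 1,
        "container_age_seconds": round(time.monotonic() - _CONTAINER_STARTED_AT, 3),
    }
```

Logging a flag like this alongside request latency makes it easy to see how often cold starts happen and how much they cost in practice.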

How to mitigate cold starts in 2026:

- Use Provisioned Concurrency: Services like AWS Lambda allow you to pay a small premium to keep a baseline number of containers “warm” and ready to execute instantly.

- Optimize Package Size: Only import the specific libraries your function needs. A lighter codebase boots faster.

- Choose the Right Language: Compiled languages (like Go or Rust) and lightweight runtimes (like Node.js) generally boot much faster than heavy Java or C# environments.
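
Beyond trimming dependencies, a related trick is to defer an import until the code path that needs it actually runs, so requests that never touch it do not pay for loading it during start-up. The sketch below uses the standard-library `statistics` module as a stand-in for a heavy dependency; the `action` and `values` event fields are hypothetical.

```python
def handler(event, context):
    """Load the heavier dependency only on the code path that uses it."""
    if event.get("action") == "stats":
        # Deferred import: Python caches modules in sys.modules, so only the
        # first call in a container pays the load cost.
        import statistics

        return {"mean": statistics.mean(event["values"])}

    # The common, lightweight path never loads the extra module.
    return {"status": "ok"}
```

The trade-off is that the load cost moves from the cold start to the first request that needs the module, so this helps most when that path is rare.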

Top Serverless Providers in 2026

If you are ready to build, these are the industry standards:

- AWS Lambda: The pioneer of FaaS. It has the largest ecosystem and integrates tightly with Amazon’s massive suite of tools.

- Google Cloud Functions: Ideal for developers deeply embedded in the GCP ecosystem, especially when triggering functions via Firebase or BigQuery.

- Edge Compute (Cloudflare Workers / Vercel): The modern evolution of serverless. Instead of running in a centralized data center, the code runs on CDN edge nodes close to the user, which typically means near-zero cold starts and very low response times.

Real-World Use Cases for Serverless Architecture

When developing digital services and web applications, serverless shines in specific scenarios:

- Data and Media Processing: Automatically triggering a function to compress a video or generate a thumbnail the moment a user uploads a file.

- Scalable REST APIs: Building robust backends for web and mobile apps that need to handle unpredictable spikes in traffic without crashing.

- Automated CRON Jobs: Running nightly database cleanups, generating daily SEO reports, or sending batch emails without needing a dedicated server running 24/7.
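
As an illustration of the scheduled-job case, the sketch below shows the core of a nightly cleanup that a cron-style trigger might invoke. The session records, the `last_seen` field, and the 30-day cutoff are all hypothetical, and the data lives in a plain list so the example stays self-contained.

```python
from datetime import datetime, timedelta, timezone


def nightly_cleanup(sessions, now=None, max_age=timedelta(days=30)):
    """Split sessions into those to keep and the ids of those to remove.

    A real job would issue a DELETE against the database instead of
    filtering an in-memory list.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - max_age
    kept = [s for s in sessions if s["last_seen"] >= cutoff]
    removed_ids = [s["id"] for s in sessions if s["last_seen"] < cutoff]
    return kept, removed_ids
```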

Pros and Cons: Is Serverless Right for Your Next Project?

The Pros:

- Zero infrastructure management or patching.

- Automatic scalability, bounded only by provider quotas.

- Highly cost-effective for APIs with variable traffic (you pay zero when traffic is zero).

The Cons:

- Vendor lock-in (migrating AWS Lambda code to Azure Functions requires refactoring).

- Difficult local debugging and testing.

- Cold start latency can impact highly sensitive real-time applications.
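
The first two drawbacks can be softened by keeping business logic free of provider-specific code and wrapping it in a thin adapter. The sketch below assumes a Lambda-style HTTP event with a JSON `body`; the order-total logic and function names are hypothetical.

```python
import json


def calculate_order_total(items):
    """Pure business logic: no cloud SDKs and no event formats."""
    return sum(item["price"] * item["quantity"] for item in items)


def lambda_style_handler(event, context):
    """Thin adapter: translate the provider's event into plain data and back."""
    items = json.loads(event.get("body") or "[]")
    total = calculate_order_total(items)
    return {"statusCode": 200, "body": json.dumps({"total": total})}
```

With this split, `calculate_order_total` can be unit-tested locally without any cloud emulator, and moving to another provider means rewriting only the adapter.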

Frequently Asked Questions (FAQ)

Is serverless cheaper than traditional hosting?

For applications with variable or unpredictable traffic, serverless is significantly cheaper because you only pay for compute time by the millisecond. However, for applications with a constant, massive stream of 24/7 heavy traffic, a dedicated traditional server or provisioned container might be more cost-effective.
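
A rough break-even calculation makes this trade-off easier to reason about. The sketch below assumes a pricing model with a per-request fee plus a charge per GB-second of compute. Every price in the example is a placeholder chosen for round numbers, not any provider's actual rate.

```python
def serverless_monthly_cost(requests, avg_ms, memory_gb,
                            price_per_gb_second, price_per_million_requests):
    """Monthly cost under a pay-per-use model (compute time plus request fee)."""
    compute = requests * (avg_ms / 1000) * memory_gb * price_per_gb_second
    request_fee = requests / 1_000_000 * price_per_million_requests
    return compute + request_fee


def break_even_requests(server_monthly_cost, avg_ms, memory_gb,
                        price_per_gb_second, price_per_million_requests):
    """Monthly request volume at which serverless costs as much as a fixed server."""
    cost_per_request = ((avg_ms / 1000) * memory_gb * price_per_gb_second
                        + price_per_million_requests / 1_000_000)
    return server_monthly_cost / cost_per_request


# Placeholder inputs: 100 ms per request, 0.5 GB memory, illustrative prices.
example_cost = serverless_monthly_cost(1_000_000, 100, 0.5, 0.00002, 0.20)
example_break_even = break_even_requests(30.0, 100, 0.5, 0.00002, 0.20)
```

With these placeholder numbers, one million requests cost about 1.20 per month, and a fixed server priced at 30 per month only becomes cheaper beyond roughly 25 million requests per month. Plugging in your provider's current rates and your own traffic profile gives a more realistic answer.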

What languages can I use in serverless computing?

Major providers like AWS Lambda natively support Node.js, Python, Ruby, Java, Go, and .NET. You can also use custom runtimes to execute virtually any programming language.

Can a serverless function connect to a SQL database?

Yes, but you must be careful. Because serverless functions can scale out to thousands of concurrent instances, each opening its own connection, they can quickly exhaust the connection limit of a traditional relational database (like MySQL or PostgreSQL). It is highly recommended to use connection pooling proxies (like Amazon RDS Proxy) or managed NoSQL/serverless databases when building a FaaS backend.
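
On the function side, a common complementary habit is to open the connection once per container and reuse it across invocations instead of reconnecting on every request. The sketch below uses an in-memory SQLite database from the standard library as a stand-in for a real MySQL or PostgreSQL connection; the `visits` table and the handler are hypothetical.

```python
import sqlite3

# Module-level state survives for as long as the container stays warm.
_connection = None


def get_connection():
    """Create the connection on first use, then reuse it for later invocations."""
    global _connection
    if _connection is None:
        _connection = sqlite3.connect(":memory:")
        _connection.execute("CREATE TABLE IF NOT EXISTS visits (path TEXT)")
    return _connection


def handler(event, context):
    conn = get_connection()
    conn.execute("INSERT INTO visits (path) VALUES (?)", (event.get("path", "/"),))
    conn.commit()
    (count,) = conn.execute("SELECT COUNT(*) FROM visits").fetchone()
    return {"visits": count}
```

This caps usage at one connection per warm container, but it does not limit how many containers exist at once, so a pooling proxy is still worth having under heavy concurrency.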

Final Thoughts

Serverless computing architecture has matured from a niche cloud offering into a mainstream way of deploying agile, scalable applications. By removing most of the DevOps overhead of provisioning and maintaining servers, development teams can ship features faster and build highly resilient systems. Whether you are building an AI-powered data pipeline or a simple REST API, understanding FaaS is a valuable skill for modern software engineers.