In 2026, the digital landscape has shifted from a battle of human wits to an AI-driven arms race. While artificial intelligence has revolutionized productivity, it has also equipped threat actors with automated, scalable, and highly sophisticated tools. For businesses and security professionals, understanding the AI cybersecurity threats of 2026 is no longer optional; it is the foundation of digital survival.

In this guide, we break down the most dangerous AI-powered attacks and the proactive cybersecurity strategies required to defend against them.

Emerging AI Cybersecurity Threats: The Rise of Automated Attacks

The era of “obvious” phishing emails is over. Today’s attackers leverage Large Language Models (LLMs) and generative media to bypass traditional filters.

1. AI-Powered Phishing and Generative Scams

Modern AI-powered phishing campaigns use real-time data scraping to craft hyper-personalized messages. The LLMs behind them can mimic a company’s internal tone and reference actual recent projects. According to recent Google Cloud Cybersecurity Forecasts, AI-enhanced social engineering has seen a 40% higher success rate than traditional methods.

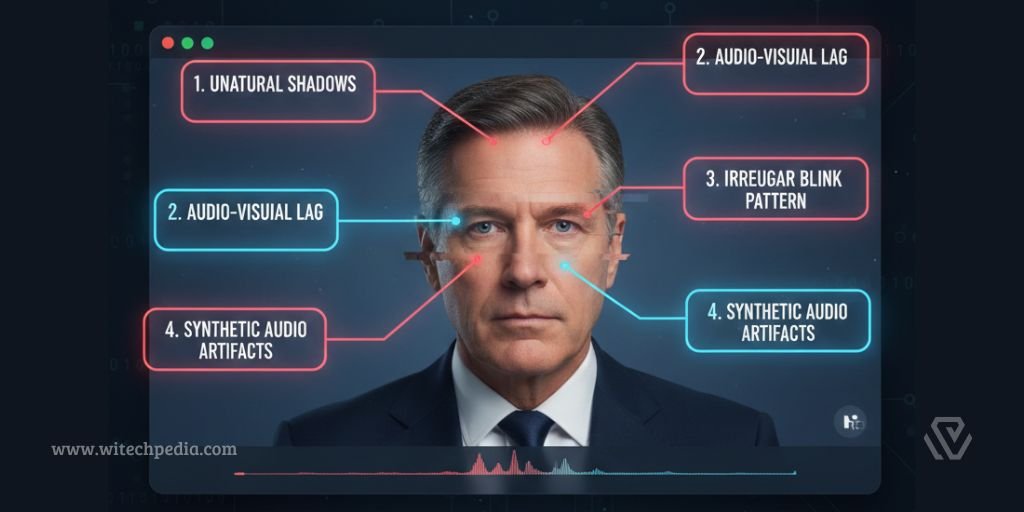

2. Deepfake Phishing and Social Engineering

The most alarming trend in 2026 is the use of real-time voice and video cloning. Attackers now impersonate C-suite executives during live video calls. As we noted in our Google Gemini Review (2026), while LLMs are incredible tools for creativity, their ability to generate synthetic media makes them a primary driver of AI cybersecurity threats.

3. Automated Malware Evolution

We are seeing the rise of polymorphic AI malware. This code uses embedded machine learning to analyze the victim’s environment and rewrite its own signature in real time, which lets it slip past traditional antivirus software that relies on static signature databases.
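To see why a static database struggles with this, here is a minimal sketch of hash-based signature matching. The payload strings and the `KNOWN_BAD` set are hypothetical placeholders rather than real samples, and real antivirus engines use richer signatures than a single digest.

```python
import hashlib

# Hypothetical signature database: SHA-256 digests of known-bad payloads.
KNOWN_BAD = {hashlib.sha256(b"EXAMPLE-PAYLOAD-v1").hexdigest()}


def static_scan(payload: bytes) -> bool:
    """Return True if the payload's digest appears in the signature database."""
    return hashlib.sha256(payload).hexdigest() in KNOWN_BAD


original = b"EXAMPLE-PAYLOAD-v1"
variant = b"EXAMPLE-PAYLOAD-v2"  # a single byte differs from the original

print(static_scan(original))  # True: digest matches a stored signature
print(static_scan(variant))   # False: one changed byte yields a new digest
```

Because any change to the bytes produces a different digest, a payload that rewrites itself never matches the stored entry, which is why the defenses discussed later focus on behavior instead of fixed signatures.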

Targeted AI System Vulnerabilities: Data Poisoning and Noise

It isn’t just that attackers use AI; they are also targeting the AI models that businesses rely on, creating unique vulnerabilities in AI systems.

- Data Poisoning: By injecting malicious data into a company’s training set, hackers create “blind spots” in security models. The NIST AI Risk Management Framework highlights this as a critical concern for firms.

- Adversarial AI Attacks: Hackers can add invisible “digital noise” to files. To a human, the file looks normal, but to a security AI, the noise triggers a misclassification, allowing malware to be labeled as “safe.”
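The adversarial attack described above can be illustrated with a toy linear classifier. This is a simplified sketch in the spirit of sign-gradient perturbations, not a description of any specific real-world attack. The weights, bias, feature values, and the `epsilon` budget are all made-up assumptions.

```python
# Toy linear "malware classifier": a score above 0 means malicious.
WEIGHTS = [0.9, -0.4, 0.7, 0.2]  # hypothetical learned weights
BIAS = -0.5                      # hypothetical bias term


def score(features):
    """Weighted sum of the features plus the bias."""
    return sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS


def classify(features):
    return "malicious" if score(features) > 0 else "safe"


def perturb(features, epsilon):
    """Shift each feature by epsilon in the direction that lowers the score.

    For a linear model the gradient of the score with respect to the input
    is the weight vector, so stepping against the sign of each weight is the
    most efficient way to reduce the score under a per-feature budget.
    """
    return [x - epsilon * (1 if w > 0 else -1) for w, x in zip(WEIGHTS, features)]


sample = [0.6, 0.3, 0.4, 0.5]
noisy = perturb(sample, epsilon=0.15)

print(classify(sample))  # malicious
print(classify(noisy))   # safe
```

No feature moves by more than 0.15, yet the label flips. Real attacks target far larger models with much smaller perturbations, but the mechanism of nudging inputs along the model's own gradient is the same idea.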

Effective AI Defense Mechanisms: Turning AI into a Shield

To counter these threats, organizations must move away from reactive “firewall” thinking and toward proactive AI defense mechanisms.

1. AI Threat Detection and Anomaly Analysis

Instead of looking for known viruses, machine learning for threat detection now focuses on behavior: it learns what normal activity looks like and flags deviations from it. AI manages this at scale across complex Internet of Things (IoT) networks, identifying compromised devices before they can infect the main server.
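As a rough illustration of behavior-based detection, the sketch below flags devices whose traffic deviates sharply from the fleet median, using a modified z-score based on the median absolute deviation (MAD). The device names, traffic figures, and the 3.5 threshold are illustrative assumptions. Production systems typically model each device type separately and use far richer features.

```python
from statistics import median


def mad_outliers(readings, threshold=3.5):
    """Return device ids whose reading is far from the fleet median.

    Uses the modified z-score 0.6745 * |x - median| / MAD, which is less
    distorted by the outliers themselves than a mean/standard-deviation score.
    """
    values = list(readings.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread at all, so nothing can be scored
    return [
        device
        for device, value in readings.items()
        if 0.6745 * abs(value - med) / mad > threshold
    ]


# Hypothetical outbound traffic per device, in KB per minute.
traffic_kb_per_min = {
    "thermostat-1": 12,
    "thermostat-2": 14,
    "camera-1": 15,
    "camera-2": 13,
    "lock-1": 11,
    "sensor-7": 240,
}

print(mad_outliers(traffic_kb_per_min))  # ['sensor-7']
```

The flagged device could then be quarantined for inspection. Note that nothing here depends on knowing what malware is running on it, only that its behavior is unusual.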

2. Cybersecurity Strategies 2026: Zero-Trust and AI

In 2026, the mantra is “Never Trust, Always Verify.” A Zero-Trust AI architecture, built on standards like NIST SP 800-207, continuously authenticates and authorizes every user and device instead of trusting anything based on its network location. If a system failure occurs, manual intervention using essential CMD commands remains a vital fallback for recovery.
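A minimal sketch of the "always verify" idea follows, assuming a short-lived token bound to a specific device and re-checked on every request. The function names, the token layout, the five-minute lifetime, and the hard-coded secret are illustrative assumptions and are not taken from NIST SP 800-207. A real deployment would use an established identity provider and a secrets manager.

```python
import hashlib
import hmac
import time

SECRET = b"demo-only-secret"  # assumption: a real system loads this securely
MAX_AGE_SECONDS = 300         # assumption: tokens expire after five minutes


def _sign(payload: str) -> str:
    return hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(user: str, device: str, now: float) -> str:
    """Create a token bound to a user, a device, and an issue time."""
    payload = f"{user}|{device}|{int(now)}"
    return f"{payload}|{_sign(payload)}"


def verify_request(token: str, device: str, now: float) -> bool:
    """Re-check signature, device binding, and freshness on every request."""
    try:
        user, token_device, issued, signature = token.split("|")
        issued_at = int(issued)
    except ValueError:
        return False  # malformed token
    expected = _sign(f"{user}|{token_device}|{issued}")
    return (
        hmac.compare_digest(signature.encode(), expected.encode())
        and token_device == device
        and 0 <= now - issued_at <= MAX_AGE_SECONDS
    )


now = time.time()
token = issue_token("alice", "laptop-1", now)
print(verify_request(token, "laptop-1", now + 60))   # True: fresh, same device
print(verify_request(token, "laptop-2", now + 60))   # False: different device
print(verify_request(token, "laptop-1", now + 900))  # False: token expired
```

The point of the sketch is that a valid login earlier in the session is never enough on its own: each request is evaluated again against identity, device, and time.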

3. Quantum-Safe Data Protection in the AI Era

As we move closer to the “Q-Day” threat to encryption, AI is being used to manage the transition to post-quantum cryptography. You can learn more about this in our deep dive into Quantum Computing in 2026.

2026 Case Study: Thwarting a Deepfake CEO Fraud

In early 2026, a major financial institution was targeted by a “vishing” (voice phishing) attack. An attacker used a high-fidelity AI voice clone of the CFO to request an emergency $5 million transfer.

The Defense: The bank’s AI-driven communication tool detected “synthetic artifacts” in the audio—micro-frequencies that the human ear cannot hear. The system flagged the call, triggered a secondary biometric check, and thwarted the theft.

Implementation Guide: Deploying AI Cybersecurity Strategies

- Risk Assessment: Audit your current AI dependencies and identify the AI cybersecurity threats they are exposed to.

- Deploy AI-Driven Firewalls: Invest in tools that offer real-time anomaly detection.

- Staff Training: Educate employees on the existence of video and voice deepfakes.

- Continuous Monitoring: Use analytics to track the health of your AI-driven data protection tools.

Frequently Asked Questions (FAQ)

What are the biggest AI cybersecurity threats for small businesses?

Small businesses are primary targets for automated phishing and ransomware that uses AI to find unpatched vulnerabilities faster than a human could.

How do AI defense mechanisms work to improve response times?

AI can analyze millions of data points per second, identifying and isolating a breach in milliseconds—tasks that would take a human security team hours.

What are the best cybersecurity strategies for 2026?

Focus on proactive AI integration, zero-trust architecture, and employee education regarding deepfake technology.